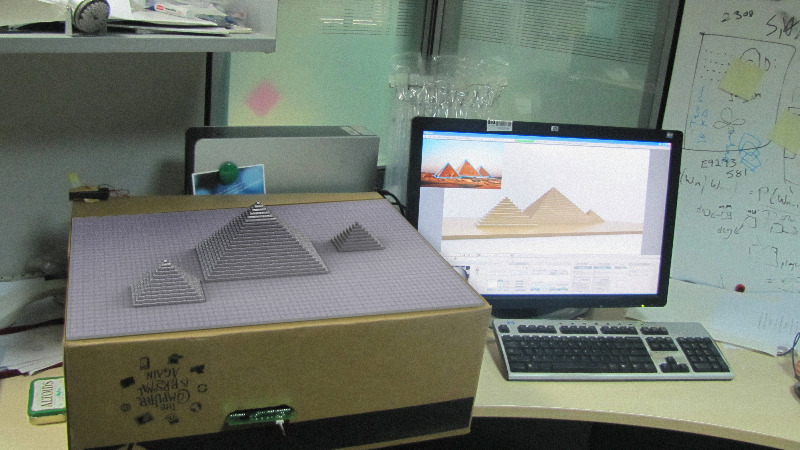

What if present-day computer graphics could be physically touched and felt, in addition to being seen? Graphics rendered on a regular 2-D screen provide rich visual feedback about an object. Present-day touchscreen devices rely on direct manipulation of on-screen elements, which are essentially 2-D representations of real 3-D forms. However, the feedback is limited to what the screen can render. We found that a z-dimension is missing: without it, a display offers no stimulus for the sense of touch, because it lacks the confirming tactile feel that physical buttons and UI controls provide when they are touched.

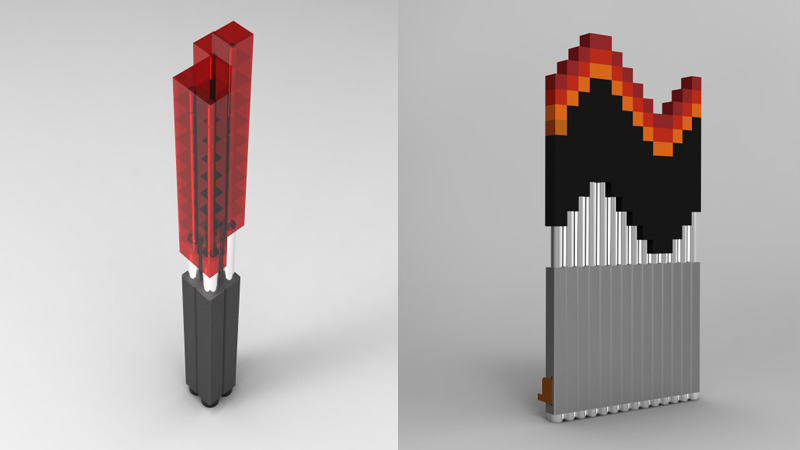

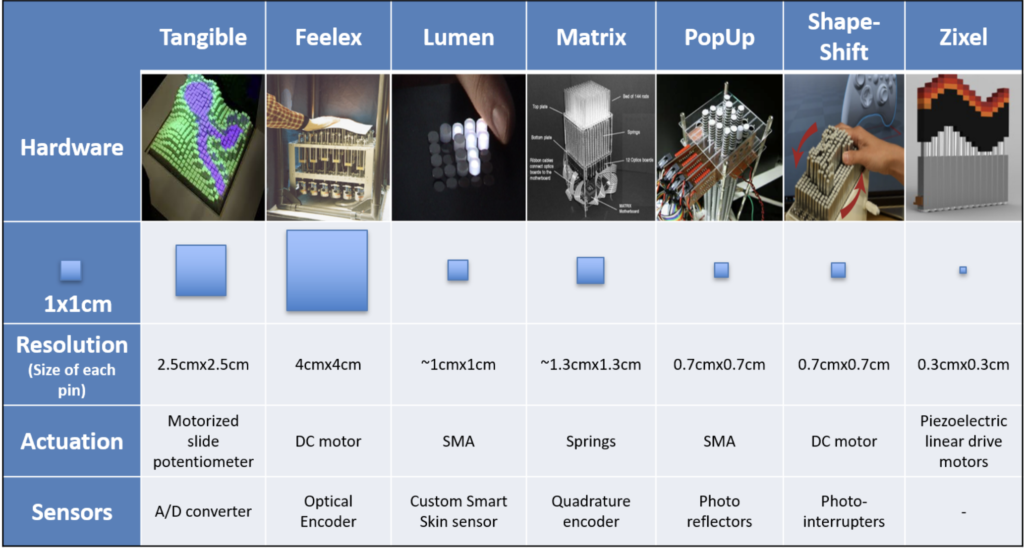

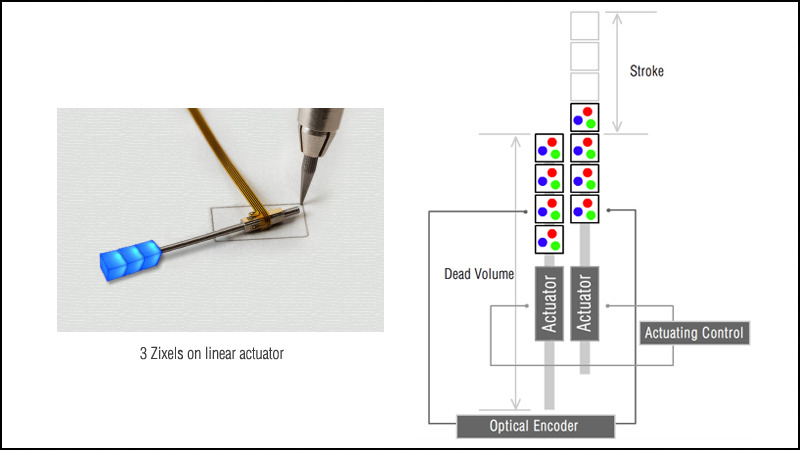

Interactive RGBZ (Red, Green, Blue, Z-axis) Pin-Art is a computer-controlled pin-art display that can render colored pixels. This project aims to extend present 2-D displays into a 2.5-D form in which graphics and haptic sensation can be communicated directly. We also define a basic actuated addition to the present 2-D pixel form, a physical Z-axis, which gives the virtual object a physical manifestation for rich tactile and graphical feedback.
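To make the RGBZ pixel idea concrete, here is a minimal Python sketch of how a frame of such pixels might be represented in software. It is an illustration only, not the project's actual implementation: the names `RGBZPixel` and `make_frame`, the 0-255 color range, and the normalized 0.0 to 1.0 pin height are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass(frozen=True)
class RGBZPixel:
    """One hypothetical RGBZ pixel: a color plus a physical pin height."""
    r: int    # red, assumed 0-255
    g: int    # green, assumed 0-255
    b: int    # blue, assumed 0-255
    z: float  # assumed normalized pin height: 0.0 = flush, 1.0 = fully raised


def make_frame(
    colors: Sequence[Sequence[Tuple[int, int, int]]],
    heights: Sequence[Sequence[float]],
) -> List[List[RGBZPixel]]:
    """Combine a color image and a height map of equal size into an RGBZ frame."""
    if len(colors) != len(heights):
        raise ValueError("color image and height map must have the same row count")
    frame = []
    for color_row, height_row in zip(colors, heights):
        if len(color_row) != len(height_row):
            raise ValueError("color image and height map rows must match in length")
        frame.append([
            # Clamp the height so a pin is never driven past its assumed travel.
            RGBZPixel(r, g, b, max(0.0, min(1.0, z)))
            for (r, g, b), z in zip(color_row, height_row)
        ])
    return frame


# Usage: a 1x2 frame with one flush red pin and one fully raised green pin.
frame = make_frame([[(255, 0, 0), (0, 255, 0)]], [[0.0, 1.5]])
```

Keeping the height as a fourth channel beside the color mirrors the description above: each pixel carries both what is seen and what is felt.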

Update: TEI 2012 | SIGGRAPH ASIA 2011 paper.